GoodVision AI, an AI infrastructure company led by former AWS and IBM executives, today announced the launch of an intelligent compute scheduling solution combined with distributed edge inference infrastructure. The platform is designed to address increasing token consumption, latency, and cost challenges driven by the rapid adoption of AI agents.

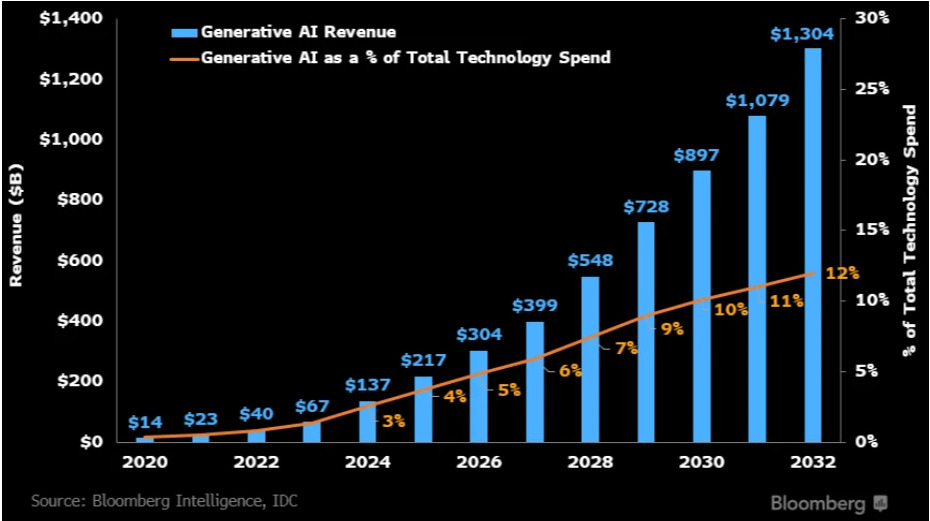

The announcement comes amid a broader shift in AI infrastructure. At GTC 2026, NVIDIA CEO Jensen Huang stated that AI systems are evolving from traditional “data centers” into “token factories,” where inference throughput is becoming a primary performance metric. He indicated that inference demand could grow by multiple orders of magnitude, potentially reaching a million-fold increase within the next two years.

At the same time, new classes of AI systems are accelerating demand for inference. These include agent-based platforms capable of understanding user intent, maintaining long-term memory, invoking external tools, and executing multi-step workflows. Such systems introduce a new constraint around token consumption: a complex task may require hundreds of model calls, significantly increasing usage compared to traditional prompt-response interactions. Industry observations indicate that agent-driven workflows can substantially increase token expenditure, with some high-usage environments reaching extremely large daily consumption levels.

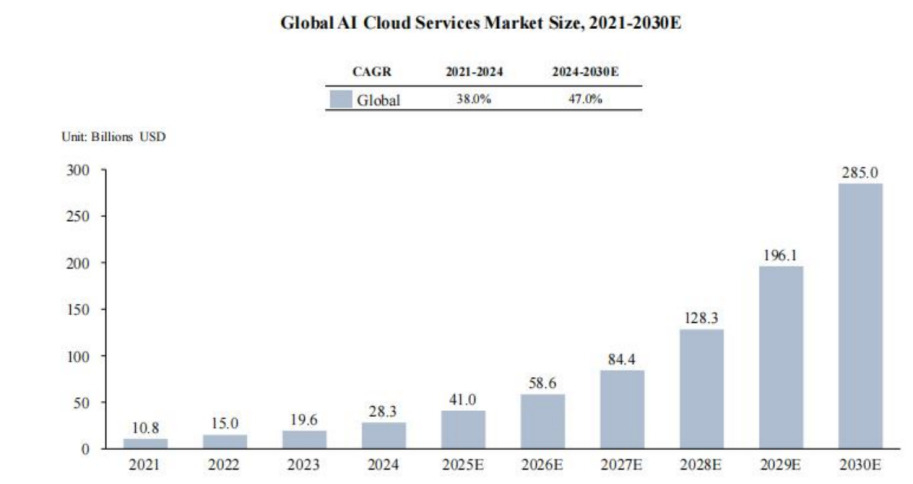

In response, hyperscale cloud providers are increasing capital expenditures to expand AI infrastructure capacity, with combined planned investments exceeding $280 billion in 2026. These investments are largely focused on securing power resources and scaling compute capacity. However, the rapid growth in demand raises the question of whether centralized infrastructure expansion alone can address real-world challenges related to efficiency, cost, and latency.

Addressing Compute Congestion Through Intelligent Allocation

GoodVision AI was founded in 2019 based on the observation that application demand in cloud computing environments consistently scales faster than available compute supply. This structural imbalance has become more pronounced with the rapid proliferation of large models and AI applications.

The company reports that its AI-related revenue reached nearly $10 million in 2025, with over 100% year-over-year growth. With the continued rollout of its AI Factory and broader infrastructure platform, GoodVision AI expects total AI revenue to scale to hundreds of millions of dollars by 2027.

As AI applications are deployed across millions of users, inference workloads are increasingly distributed across geographies, devices, and network environments. Existing cloud architectures, designed primarily for centralized workloads, are not optimized for this demand pattern. As a result, production environments are seeing rising latency, increasing costs, and reduced output reliability.

GoodVision AI’s platform is designed around a distributed and hierarchical compute model:

- Centralized cloud infrastructure for complex, high-value workloads

- Edge and localized compute for high-frequency, latency-sensitive inference

- Intelligent scheduling to dynamically route workloads based on task requirements

This approach enables workloads of varying complexity to be allocated to the most appropriate compute resources in real time, reducing congestion in centralized data centers while improving performance and cost efficiency.

Positioning as a Compute Distribution Network

The current AI infrastructure landscape includes hyperscalers, GPU-focused cloud providers, and model service platforms. While each addresses a layer of the stack, none fully resolves the challenges associated with large-scale, distributed inference.

Hyperscalers rely on centralized data centers, which may become inefficient for geographically distributed and latency-sensitive workloads. GPU cloud providers expand compute supply but lack orchestration capabilities, while model routing platforms enable flexibility across models without controlling underlying infrastructure.

GoodVision AI positions its platform as an intelligent compute distribution network designed to orchestrate inference workloads across heterogeneous environments. The system dynamically allocates tasks to the most suitable compute resources in real time, addressing both performance and cost constraints.

AI Factory Architecture and Token-Level Scheduling

GoodVision AI’s infrastructure, referred to internally as the AI Factory, combines GPU compute resources with a globally distributed network of compute nodes and an intelligent scheduling layer.

The platform includes a proprietary orchestration system that functions as a control plane for workload management, along with a token aggregation layer. Unlike API-based routing platforms, GoodVision AI integrates owned compute infrastructure and deployable private model clusters, enabling direct control over resource allocation.

A core innovation is token-level compute scheduling, which allows workloads to be routed based on task complexity, latency sensitivity, and cost considerations. This enables real-time optimization across public cloud environments, private data centers, and distributed edge nodes.
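The routing idea described above can be illustrated with a minimal sketch. It is not GoodVision AI's scheduler, whose internals are not described here. The tier names, latency and price figures, the 1-to-10 complexity scale, and the `route` function are all hypothetical values chosen for illustration. The sketch picks the cheapest tier that satisfies a task's complexity and latency requirements, and falls back to the most capable tier when none qualifies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    latency_ms: float          # assumed typical round-trip latency
    cost_per_1k_tokens: float  # assumed price, arbitrary currency units
    max_complexity: int        # highest task complexity this tier can serve


@dataclass(frozen=True)
class Task:
    tokens: int
    complexity: int            # hypothetical scale: 1 (simple) to 10 (complex)
    latency_budget_ms: float


# Illustrative tiers only; none of these numbers come from the announcement.
TIERS = [
    Tier("edge", latency_ms=20.0, cost_per_1k_tokens=0.40, max_complexity=3),
    Tier("private_dc", latency_ms=60.0, cost_per_1k_tokens=0.25, max_complexity=7),
    Tier("public_cloud", latency_ms=150.0, cost_per_1k_tokens=0.30, max_complexity=10),
]


def route(task: Task, tiers: list[Tier] = TIERS) -> Tier:
    """Return the cheapest tier that meets the task's complexity and latency needs."""
    eligible = [
        t for t in tiers
        if t.max_complexity >= task.complexity
        and t.latency_ms <= task.latency_budget_ms
    ]
    if not eligible:
        # No tier satisfies both constraints: prefer capability over latency.
        return max(tiers, key=lambda t: t.max_complexity)
    return min(eligible, key=lambda t: t.cost_per_1k_tokens * task.tokens / 1000)


if __name__ == "__main__":
    for task in (
        Task(tokens=200, complexity=2, latency_budget_ms=30),
        Task(tokens=1000, complexity=5, latency_budget_ms=100),
        Task(tokens=5000, complexity=9, latency_budget_ms=500),
    ):
        print(task, "->", route(task).name)
```

In this sketch, a short, simple request with a tight latency budget lands on the edge tier, while a complex request with a relaxed budget goes to the centralized tier. A production scheduler would also need to account for live load, queue depth, and data locality, which are omitted here.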

The company is also deploying edge compute infrastructure to bring inference closer to end users. This reduces latency and improves responsiveness for real-time applications, enabling compute delivery models similar to content delivery networks.

Global Expansion and Infrastructure Scale

Since 2025, GoodVision AI has expanded its inference compute footprint across Asia and other regions, with Japan, South Korea, and the United States serving as key strategic hubs. The company has secured more than 400 MW of power capacity and plans to scale its infrastructure to support up to 400,000 inference GPUs.

These resources are integrated into a vertically managed platform spanning infrastructure development, operations, and workload distribution. Owned compute capacity provides resilience during periods of supply constraint and enables more efficient scheduling across the network.

Future Outlook: Distributed AI Infrastructure

As AI agents become embedded in enterprise systems, consumer applications, and physical environments, demand for continuous inference is expected to grow significantly. In parallel, AI infrastructure is evolving toward a distributed model, where compute resources are allocated dynamically across a global network.

GoodVision AI’s AI Factory concept reflects this shift, with localized inference hubs designed to serve regional demand while remaining interconnected within a broader compute network. These deployments enable real-time inference to be processed closer to end users, improving performance and efficiency.

In existing deployments, customers using GoodVision AI’s infrastructure have reported cost reductions of approximately 60%, latency reductions of 50%, and improvements of around 50% in platform gross margins.

The company is expanding into compute-intensive sectors including video generation and biotechnology, where large-scale, low-latency inference is critical. As these industries continue to adopt AI, demand for distributed, scalable infrastructure is expected to increase.

GoodVision AI anticipates that AI infrastructure will continue to evolve toward a globally distributed model, where compute becomes a foundational utility accessible to enterprises, developers, and users across industries.

About GoodVision AI

GoodVision AI is an AI infrastructure company led by former AWS and IBM executives, building intelligent compute scheduling and distributed edge inference systems for the next generation of AI applications. Founded in 2019, the company’s platform helps enterprises address challenges around token consumption, latency, and cost as AI workloads scale. GoodVision AI combines centralized cloud infrastructure, localized edge compute, and proprietary orchestration technology to dynamically route workloads based on complexity, latency sensitivity, and cost. With infrastructure expansion across Asia and the United States, the company is building a globally distributed compute network to support real-time, large-scale AI inference.

Media Contact

Joy Chen

media@goodvision.ai